A major Cloudflare outage disrupted Internet connectivity on February 20, 2026. The incident affected customers using Cloudflare's Bring Your Own IP (BYOIP) service, and websites and applications hosted on the affected addresses became unreachable. The outage lasted 6 hours and 7 minutes. Cloudflare confirmed that it was caused by an internal software bug, not by a cyberattack.

What Happened During the Cloudflare Outage

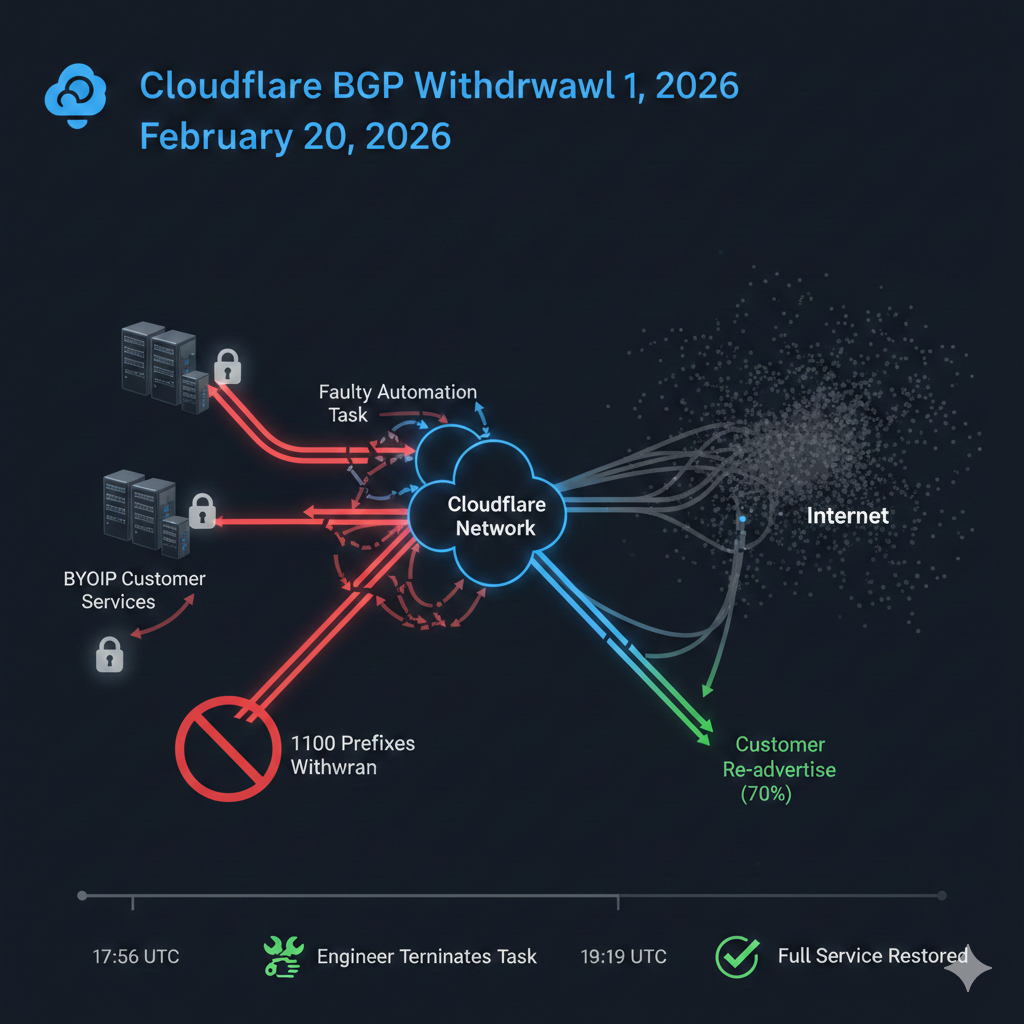

The outage began at 17:48 UTC, when an automated system mistakenly withdrew routes for active IP prefixes. These routes tell other networks where to send traffic for those addresses, so once they were withdrawn, traffic could no longer reach its destination and failures spread across multiple services. As a result, users experienced:

- Website connection failures

- Application timeouts

- Increased network latency

- Routing instability
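To illustrate why withdrawing a prefix makes its addresses unreachable, here is a toy longest-prefix-match lookup. It is a deliberate simplification: real routers learn routes from many BGP peers, while this sketch uses a plain dictionary. The prefixes are RFC 5737 documentation ranges standing in for hypothetical BYOIP customers, and `lookup` and `withdraw` are illustrative names, not part of any Cloudflare system.

```python
import ipaddress
from typing import Dict, Optional

# Toy routing table: prefix -> next hop. The prefixes are documentation
# ranges standing in for hypothetical BYOIP customers.
routes: Dict[ipaddress.IPv4Network, str] = {
    ipaddress.ip_network("192.0.2.0/24"): "cloudflare-edge",
    ipaddress.ip_network("198.51.100.0/24"): "cloudflare-edge",
}


def lookup(table: Dict[ipaddress.IPv4Network, str], address: str) -> Optional[str]:
    """Return the next hop of the longest matching prefix, or None if no route."""
    addr = ipaddress.ip_address(address)
    matches = [net for net in table if addr in net]
    if not matches:
        return None
    best = max(matches, key=lambda net: net.prefixlen)
    return table[best]


def withdraw(table: Dict[ipaddress.IPv4Network, str], prefix: str) -> None:
    """Simulate a route withdrawal by deleting the prefix from the table."""
    table.pop(ipaddress.ip_network(prefix), None)


print(lookup(routes, "192.0.2.10"))  # cloudflare-edge
withdraw(routes, "192.0.2.0/24")
print(lookup(routes, "192.0.2.10"))  # None: no route covers the address
```

Because no other route covers the withdrawn range in this toy table, the lookup fails outright, which mirrors how traffic to the affected addresses had nowhere to go.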

Cloudflare engineers investigated the problem, traced it to the faulty process, and stopped the automation before it could affect additional customers.


Scope and Impact of the Incident

Cloudflare reported that about 1,100 IP prefixes were withdrawn, roughly 25% of all BYOIP prefixes. As a result, several Cloudflare services were disrupted.

Affected services included:

- Content Delivery Network (CDN)

- Magic Transit

- Spectrum

- Dedicated Egress services

The website for Cloudflare's public DNS resolver (1.1.1.1) also returned temporary access errors, although DNS resolution itself continued to work normally.

Root Cause: Internal API Configuration Bug

The root cause was a bug in Cloudflare’s Addressing API automation system. Specifically, a cleanup task incorrectly marked active IP prefixes for deletion. As a result, the system began removing valid IP routes from the network.
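The exact code path is not described here, so the following is only a generic sketch of one defensive pattern against this class of bug. It assumes a hypothetical `Prefix` record with `pending_delete` and `active` flags (not Cloudflare's real Addressing API schema). The selection step skips any prefix that is still active, and the deletion step re-checks and refuses outright if an active prefix slips through.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Prefix:
    # Hypothetical record for illustration only.
    cidr: str
    pending_delete: bool  # explicitly scheduled for removal
    active: bool          # still advertised and bound to a service


def select_for_cleanup(prefixes: List[Prefix]) -> List[Prefix]:
    """Return only prefixes that are scheduled for deletion AND inactive."""
    return [p for p in prefixes if p.pending_delete and not p.active]


def delete_prefixes(selected: List[Prefix]) -> List[str]:
    """'Delete' the selected prefixes, refusing if any is still active.

    Returns the CIDRs that would be deleted; a real job would call its
    deletion API here instead.
    """
    still_active = [p.cidr for p in selected if p.active]
    if still_active:
        raise RuntimeError(f"refusing to delete active prefixes: {still_active}")
    return [p.cidr for p in selected]


inventory = [
    Prefix("192.0.2.0/24", pending_delete=False, active=True),
    Prefix("198.51.100.0/24", pending_delete=True, active=False),
    # Marked for deletion while still active, the failure mode described above.
    Prefix("203.0.113.0/24", pending_delete=True, active=True),
]

print(delete_prefixes(select_for_cleanup(inventory)))  # ['198.51.100.0/24']
```

The second check is redundant when the selection is correct; its value is that it still holds when the selection logic itself is the buggy part.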

After stopping the faulty task, Cloudflare engineers restored the withdrawn routes and the affected service configurations.

Official source: Cloudflare Incident Report

Recovery Timeline

Cloudflare restored most services within hours. The company completed full recovery at 23:03 UTC. Key timeline events included:

- 17:48 UTC — Outage started

- 18:18 UTC — Incident declared

- 18:46 UTC — Engineers stopped the faulty cleanup task

- 19:19 UTC — Customers began restoring services

- 23:03 UTC — Full recovery completed

Some customers needed additional support because their service bindings had also been removed, so engineers had to reapply global configurations for them.

How Cloudflare Fixed the Issue

Cloudflare implemented several improvements to prevent future outages. These include:

- Stronger API validation to prevent malformed queries

- Safer deployment systems with health-monitored rollouts

- Automatic rollback protection to restore services quickly

- Better monitoring and alert systems for abnormal changes
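Two of these safeguards, rejecting malformed queries and catching abnormally large changes, can be sketched as simple guard functions. This is a minimal illustration, not Cloudflare's implementation: the `status` parameter, the `pending_delete` value, and the 1% threshold are all hypothetical assumptions.

```python
# Hypothetical guard rails for an automated prefix-cleanup job. The parameter
# name "status" and the 1% threshold are illustrative assumptions only.
MAX_WITHDRAW_FRACTION = 0.01


class ValidationError(ValueError):
    """Raised when a cleanup query is malformed."""


def validate_cleanup_query(params: dict) -> None:
    """Reject a missing or empty filter instead of treating it as 'match all'."""
    status = params.get("status")
    if not status:
        raise ValidationError("cleanup query needs a non-empty 'status' filter")
    if status != "pending_delete":
        raise ValidationError(f"unexpected status filter: {status!r}")


def check_change_size(to_withdraw: int, total: int) -> None:
    """Raise if one run would withdraw an abnormally large share of prefixes,
    so the caller can abort the job and alert an operator."""
    if total > 0 and to_withdraw / total > MAX_WITHDRAW_FRACTION:
        raise RuntimeError(
            f"refusing to withdraw {to_withdraw} of {total} prefixes "
            f"(limit {MAX_WITHDRAW_FRACTION:.0%})"
        )


validate_cleanup_query({"status": "pending_delete"})  # well-formed: passes
for bad in ({}, {"status": ""}):
    try:
        validate_cleanup_query(bad)
    except ValidationError as err:
        print("rejected:", err)

try:
    # 1,100 of a hypothetical 4,400 prefixes is 25%, the scale of this incident.
    check_change_size(1_100, 4_400)
except RuntimeError as err:
    print("blocked:", err)
```

The key design choice is failing closed: an empty filter raises an error rather than silently widening the query, and a change of unusual size stops the job instead of merely being logged.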

In addition, Cloudflare continues its reliability program called Code Orange: Fail Small, which aims to minimize risks from future automation errors.
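The "fail small" idea pairs naturally with the health-monitored rollouts and automatic rollback listed above: apply a change to a small group first, widen it only while health checks pass, and undo everything on the first failure. The sketch below is a hypothetical helper, not Cloudflare's deployment system. The growth factor of 4, the `dc-N` site names, and the simulated health signal are all assumptions.

```python
from typing import Callable, List


def plan_stages(n: int, first: int = 1, factor: int = 4) -> List[int]:
    """Split n targets into growing stages, e.g. n=10 -> [1, 4, 5]."""
    sizes: List[int] = []
    remaining, size = n, first
    while remaining > 0:
        take = min(size, remaining)
        sizes.append(take)
        remaining -= take
        size *= factor
    return sizes


def staged_rollout(
    targets: List[str],
    apply: Callable[[str], None],
    revert: Callable[[str], None],
    healthy: Callable[[], bool],
) -> bool:
    """Apply a change stage by stage; on a failed health check, revert
    everything applied so far and stop. Returns True only if all stages pass."""
    applied: List[str] = []
    start = 0
    for size in plan_stages(len(targets)):
        for target in targets[start:start + size]:
            apply(target)
            applied.append(target)
        start += size
        if not healthy():
            for target in reversed(applied):
                revert(target)
            return False
    return True


# Demo with hypothetical data-center names and a simulated health signal
# that fails once five or more sites carry the change.
changed: set = set()
sites = [f"dc-{i}" for i in range(10)]
ok = staged_rollout(sites, changed.add, changed.discard, lambda: len(changed) < 5)
print(ok, sorted(changed))  # False []
```

Here the problem surfaces in the second stage, after only five of ten sites have changed, and the rollback leaves none of them modified. Geometric stage growth keeps early exposure small while still finishing large rollouts in a few steps.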

Industry Impact and Lessons

This outage highlights the importance of reliable Internet infrastructure. Even small configuration errors can cause global disruption. Therefore, companies must implement strong monitoring, redundancy, and rollback systems.

Cloudflare acknowledged the mistake and apologized to customers. Moreover, the incident shows why careful testing and staged deployments are crucial for global networks.


Reference: Cloudflare’s official blog post‑mortem on the February 20, 2026 outage